The Fake News that Swept Social Media After Bondi Beach Terror Attack

Syrian Immigrant Hero's Identity Manipulated by AI-Generated Fake Articles

- The identity of a Sydney terror attack hero was manipulated through AI-generated fake articles that spread on social media.

- False information transformed a Syrian immigrant into a Western-named white person, which was even repeated by X's AI.

- A fake news site hastily created on the day of the attack spread faster than fact-checks, causing information chaos.

15 Dead in Terror Attack, and a Distorted Hero

On December 14, a terrorist shooting targeted a Jewish Hanukkah celebration at Bondi Beach in Sydney, Australia. Two gunmen opened fire, killing at least 15 people and injuring dozens. Local police officially declared it a terrorist attack.

Amid the chaos, footage emerged of a man wresting a rifle from one of the gunmen with his bare hands. He was Ahmed al Ahmed, a Syrian immigrant and fruit shop owner. His courage was verified by authorities and major news outlets, and the story spread worldwide.

But simultaneously, a completely different story began spreading across the internet.

The Birth of an AI-Generated Fake Article

Hours after the attack, an article appeared on a website called 'The Daily,' impersonating Australian media. Written by 'senior crime correspondent Rebecca Chen,' the article identified the hero as Edward Crabtree.

The article described him as a 43-year-old IT professional and claimed to be an 'exclusive interview' conducted from his hospital bed. It included detailed narrative such as: "He left his Bondi apartment Saturday afternoon for his usual beach walk, never imagining he would come face-to-face with an armed terrorist."

This false information spread rapidly through social media, and even X's AI chatbot Grok repeated this misinformation. When users asked "who was the hero," Grok provided Crabtree's name as the answer.

Fact-Checkers Uncover the Manipulation

Belgian public broadcaster RTBF's fact-checking team 'Fakey' investigated 'The Daily' website and found several telltale signs:

- The reporter's profile photo changed with each page refresh. This is a typical characteristic of AI-generated images.

- Domain registration information revealed the URL was created on the day of the attack. This was confirmed through Whois domain lookup services.

- Domain owner information was hidden behind a privacy service in Reykjavik.

All this evidence suggests 'The Daily' was a hastily created fake news site utilizing AI. The site, mimicking a real news organization, exploited the chaos immediately following the terror attack to spread disinformation.
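The checks the fact-checkers describe can be sketched in a few lines of Python. This is a minimal illustration, not the method RTBF used: the WHOIS text, the domain name, the year, and all function names are hypothetical, and real WHOIS output varies by registry, so the field names assumed here may not match every response.

```python
import hashlib
import re
from datetime import date, datetime
from typing import List, Optional

# Hypothetical WHOIS response, invented for illustration only.
# The year is an assumption; the article gives only the month and day.
SAMPLE_WHOIS = """\
Domain Name: EXAMPLE-DAILY.TEST
Creation Date: 2025-12-14T09:31:07Z
Registrant Organization: Privacy Service (hypothetical)
Registrant City: Reykjavik
"""


def creation_date(whois_text: str) -> Optional[date]:
    """Extract the 'Creation Date' field, if the response has one."""
    match = re.search(
        r"^Creation Date:\s*(\d{4}-\d{2}-\d{2})",
        whois_text,
        re.MULTILINE | re.IGNORECASE,
    )
    if match is None:
        return None
    return datetime.strptime(match.group(1), "%Y-%m-%d").date()


def red_flags(whois_text: str, event_day: date, max_days_after: int = 1) -> List[str]:
    """List warning signs: a very recent domain and a hidden registrant."""
    flags = []
    created = creation_date(whois_text)
    if created is not None:
        days_after = (created - event_day).days
        if 0 <= days_after <= max_days_after:
            flags.append("domain registered around the event date")
    if re.search(r"privacy|redacted|proxy", whois_text, re.IGNORECASE):
        flags.append("registrant hidden behind a privacy service")
    return flags


def photo_changes_between_loads(image_loads: List[bytes]) -> bool:
    """True if the same profile-photo URL returned different bytes across loads."""
    digests = {hashlib.sha256(image).hexdigest() for image in image_loads}
    return len(digests) > 1


if __name__ == "__main__":
    print(red_flags(SAMPLE_WHOIS, date(2025, 12, 14)))
```

None of these signals proves a site is fake on its own. Many legitimate sites use privacy services, and new domains are registered every day. Taken together, and alongside a byline photo that changes on every load, they are a reason to look for independent confirmation before sharing.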

Grok Judges Even Verified Video as Fake

The problem wasn't limited to the hero's name. German public broadcaster ZDF's fact-checking team tracked another error made by Grok.

When users questioned the authenticity of verified footage, Grok responded: "This appears to be an old viral video of a man trimming palm trees in a parking lot who dropped a branch damaging a vehicle. There is no verified location, date, or injury information. It may be staged, and authenticity is uncertain."

This footage had been verified by multiple news organizations and officially confirmed by authorities. Yet the AI judged it as fake.

Why Does This Keep Happening?

This incident reveals a fundamental limitation of large language models (LLMs). They cannot reliably verify information in real time, and they tend to treat the content most frequently repeated online as 'fact.'

Amid the chaos following the terror attack, as AI-generated fake articles spread rapidly, Grok accepted them as 'majority opinion.' Falsehoods that spread more widely than verified facts appeared as truth to the AI.
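The reasoning can be illustrated with a toy example. The sketch below does not describe how Grok or any LLM works internally. It only shows why picking the most repeated claim fails when fabricated copies outnumber verified reports. The post counts, the `verified` flag, and the function names are all hypothetical.

```python
from collections import Counter
from typing import List, Optional, Tuple

# Hypothetical posts: (name claimed as the hero, whether the source is verified).
# The counts are invented to mimic fake copies outnumbering verified reports.
POSTS: List[Tuple[str, bool]] = (
    [("Edward Crabtree", False)] * 5 + [("Ahmed al Ahmed", True)] * 2
)


def most_repeated(posts: List[Tuple[str, bool]]) -> str:
    """Pick the name that appears most often, ignoring where it came from."""
    counts = Counter(name for name, _ in posts)
    return counts.most_common(1)[0][0]


def most_repeated_verified(posts: List[Tuple[str, bool]]) -> Optional[str]:
    """Pick the most frequent name among verified sources only."""
    counts = Counter(name for name, verified in posts if verified)
    if not counts:
        return None
    return counts.most_common(1)[0][0]


if __name__ == "__main__":
    print(most_repeated(POSTS))
    print(most_repeated_verified(POSTS))
```

With these invented counts, the frequency-only rule returns the fabricated name, while restricting the count to verified sources returns the real one. The hard part in practice is deciding which sources count as verified, which this sketch simply assumes is known.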

This incident also demonstrates the political nature of identity manipulation. While the actual hero was a Syrian immigrant, the fake article transformed him into a Western-named white IT professional. Someone likely intended to erase the heroic actions of an immigrant.

Information Warfare in the Social Media Era

Maria Flannery, a reporter for the European Broadcasting Union (EBU) Spotlight Network, noted that this incident shows "how quickly misinformation spreads during crisis situations."

Within hours of the terror attack, AI-generated articles were created, spread through social media, and even repeated by AI chatbots in a vicious cycle. Before traditional media could complete fact-checking, millions had already been exposed to false information.

Future Outlook [AI Analysis]

Such incidents are likely to become more sophisticated. As generative AI technology advances, the cost of creating fake news sites will decrease further. Everything from domain registration to article writing and image generation can be automated.

Simultaneously, AI chatbot credibility issues will persist. AI tools like Grok are perceived by users as 'search engine alternatives,' but their real-time fact-verification capabilities remain inadequate. This could cause greater confusion during crisis situations.

Ultimately, digital literacy education will become increasingly important. Basic information verification skills such as source checking, cross-verification, and primary source tracking will become essential at the individual level. Platform-level regulation will likely strengthen, but keeping pace with technological development will prove challenging.

More in Special

The Corsican Mafia Exposed: Breaking a Century of Silence

AI-Generated Fake Person Used to Sell Dubai Flight Seats

Facebook Groups Traded Endangered Species Using Code Language — Indonesian Broker Network Exposed

G6 Alliance Declares Support for Hormuz Strait Security... Reverses Stance Under Trump Pressure

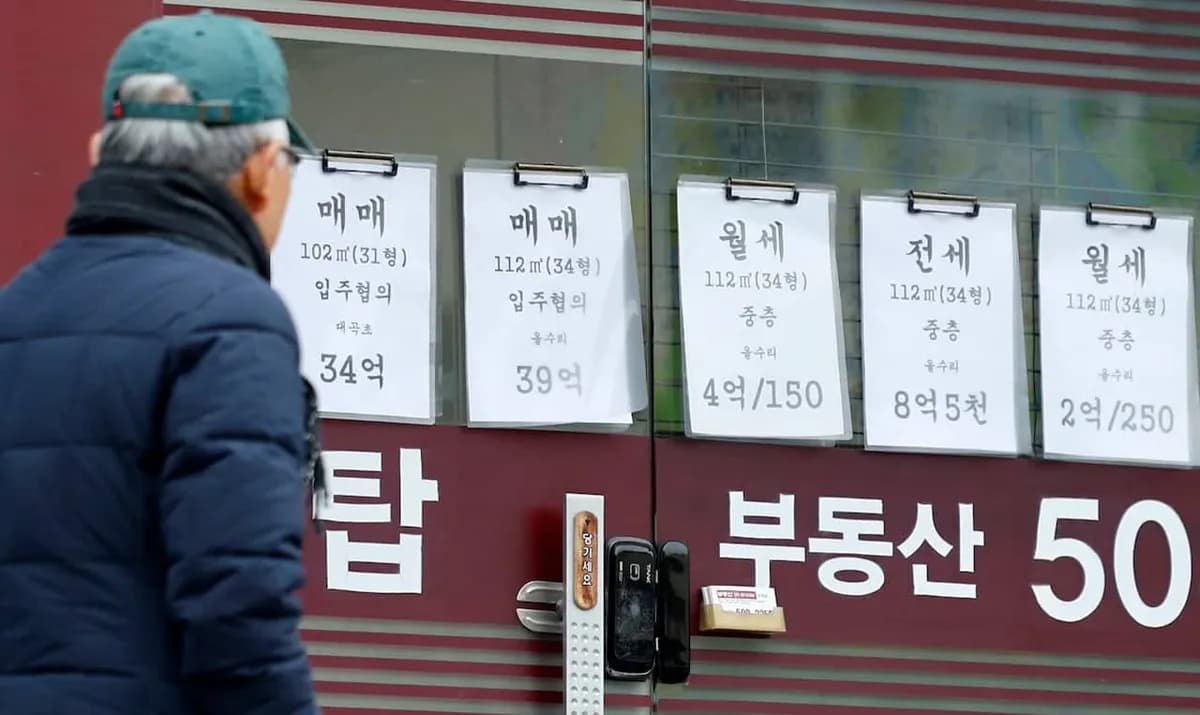

Seoul's Average Monthly Rent Surpasses 1.51 Million Won as Soaring Official Property Prices Trigger 'Housing Cost Bomb'

Rihanna Tops Spotify Without New Album for 10 Years, Proving Power of Catalog

Latest News

이스라엘, 헤즈볼라 무기 통로 레바논 다리 공습

이스라엘군, 헤즈볼라 무기 통로 레바논 다리 공습

중동행 전세기 전쟁보험료 최고 7천500만원

중동행 전세기 전쟁보험료가 최고 5만달러(7천500만원)로 상승

이란 탄도미사일, 이스라엘 방어망 뚫고 160명 부상

이란 탄도미사일이 이스라엘 방공망을 통과해 160명 부상

Middle East Conflict Drives Manufacturing Outlook to 10-Month Low

The Korea Institute for Industrial Economics & Trade survey shows April manufacturing outlook PSI plummeted to 88, falling below baseline for the first time in 10 months.

Lee Jae-myung Administration Excludes Multi-Home Officials from Real Estate Policymaking

President Lee Jae-myung has ordered the exclusion of multi-home owning public officials from all real estate policy processes.

Southeast Asia Growth Forecasts Cut Amid Oil Price Surge, Threatening Korean Exports

Maybank Research has downgraded ASEAN-6's 2026 growth forecast from 4.8% to 4.5%.

Volkswagen CEO Says Germany Should Learn from China's Industrial Strategy

Volkswagen CEO stated that Germany should learn from China's systematic industrial planning approach.

Reddit Considers Face ID to Block Bots While Maintaining Anonymity

Reddit is considering implementing biometric authentication systems such as Face ID and Touch ID to block AI bots while maintaining anonymity.