NVIDIA Releases Multilingual OCR Model Built with Synthetic Data

Nemotron OCR v2, trained on 12 million synthetic images, cuts non-English recognition error rates by up to 94%

- NVIDIA released Nemotron OCR v2, trained on 12 million synthetic images across six languages.

- Normalized Edit Distance (NED) for non-English languages fell from 0.56–0.92 to 0.035–0.069, a reduction of up to 94%.

- The model processes 34.7 pages per second on a single A100 GPU; both the dataset and model are open-source.

NVIDIA Unveils 'Nemotron OCR v2' Multilingual OCR Model

NVIDIA has released Nemotron OCR v2, a multilingual Optical Character Recognition (OCR) model built on synthetic data. Trained on 12 million synthetic images spanning six languages, the model processes 34.7 pages per second on a single A100 GPU. Normalized Edit Distance (NED) for non-English languages, where lower is better, fell from 0.56–0.92 to 0.035–0.069. The dataset is available at nvidia/OCR-Synthetic-Multilingual-v1 and the model at nvidia/nemotron-ocr-v2 on Hugging Face.
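For readers unfamiliar with the metric, the following is a minimal sketch of how a normalized edit distance can be computed. It assumes the common convention of dividing the Levenshtein distance by the length of the longer string; the article does not state which normalization NVIDIA's evaluation uses, and the function names here are illustrative only.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance, keeping only two rows of the DP table."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def ned(prediction: str, reference: str) -> float:
    """Edit distance divided by the longer length (assumed convention)."""
    longest = max(len(prediction), len(reference))
    return edit_distance(prediction, reference) / longest if longest else 0.0


def relative_reduction(before: float, after: float) -> float:
    """Fractional drop in an error metric, e.g. 0.25 means 25% lower."""
    return (before - after) / before
```

Pairing the lower ends of the two reported ranges purely for illustration, `relative_reduction(0.56, 0.035)` comes to roughly 0.94, which is in line with the "up to 94%" figure. Which language corresponds to which endpoint is not given in the article.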

Why It Matters: Synthetic Data Breaks the OCR Data Bottleneck

The central barrier to OCR model development has always been data. High-quality training requires image-text pairs annotated with precise bounding boxes at the word, line, and paragraph level, along with reading order information. Doing this manually at millions-of-images scale is neither economically nor practically feasible.

Existing benchmark datasets like ICDAR and Total-Text offer clean labels but are limited in scale—typically tens of thousands of images skewed toward English and Chinese. Web-scraped PDFs provide volume, but their text layers are often incomplete or contaminated with low-quality OCR outputs.

Synthetic data resolves both limitations simultaneously. By rendering text onto images programmatically, every bounding box, transcription, and reading order relationship is known exactly. The key challenge is realism—sufficient diversity across fonts, colors, backgrounds, layouts, and augmentations is required for the model to generalize to real-world documents.
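The idea that labels come for free when text is placed programmatically can be sketched as follows. This is not NVIDIA's pipeline: it is a toy layout routine that assumes a hypothetical fixed-width font (`char_width`, `line_height`) and skips the actual rasterization, fonts, backgrounds, and augmentations a real renderer would apply. The point is only that every word box, line index, and reading-order position is known at the moment the text is placed.

```python
import random
from dataclasses import dataclass


@dataclass
class WordLabel:
    text: str
    box: tuple          # (x0, y0, x1, y1) in pixels
    line_index: int     # which line the word belongs to
    reading_order: int  # global position in reading order


def layout_page(lines, char_width=12, line_height=24, margin=20, seed=0):
    """Place words on a virtual page and return exact ground-truth labels."""
    rng = random.Random(seed)  # seeded so the layout is reproducible
    labels = []
    order = 0
    y = margin
    for line_index, line in enumerate(lines):
        x = margin + rng.randint(0, 10)  # small random indent per line
        for word in line.split():
            width = char_width * len(word)
            box = (x, y, x + width, y + line_height)
            labels.append(WordLabel(word, box, line_index, order))
            order += 1
            x += width + char_width  # advance past the word plus one space
        y += line_height + rng.randint(2, 8)  # randomized line spacing
    return labels
```

Calling `layout_page(["hello world", "foo"])` yields three `WordLabel` records whose boxes and reading order need no manual annotation. Randomizing the indent and spacing stands in, very loosely, for the diversity the article says is needed for generalization.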

What Changed: v1 vs. v2

| Item | Nemotron OCR v1 | Nemotron OCR v2 | Change |
|---|---|---|---|
| Language support | English-centric | 6 languages (EN, JA, KO, RU, ZH, etc.) | Expanded to multilingual |
| Character set | 855 characters | 14,244 characters | CJK + Cyrillic included |
| Training data | Limited | 12M synthetic images | Large-scale synthetic |
| Non-English NED | 0.56–0.92 | 0.035–0.069 | Up to 94% improvement |
| Throughput | N/A | 34.7 pages/sec (1× A100) | Shared backbone architecture |
| Architecture | Independent modules | Shared backbone for detection, recognition, relational | Redundant compute eliminated |

The shift from v1 to v2 was fundamentally about solving a data problem, not an architecture problem. NVIDIA's team first tried expanding the character set from 855 to 14,244 characters without adding corresponding training data, and the gains were marginal. The model could theoretically output the right characters but had never learned what they looked like.
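One way to make the gap between an output vocabulary and the training data visible is a simple coverage check. The sketch below is an illustration under assumptions, not a description of NVIDIA's tooling: `coverage_report` is a hypothetical helper that counts how often each character in a model's character set appears in the training transcriptions.

```python
from collections import Counter


def coverage_report(charset, transcriptions, min_count=1):
    """Report which characters in the charset lack training examples."""
    charset = set(charset)
    counts = Counter(ch for text in transcriptions for ch in text)
    # Characters the model can emit but has seen fewer than min_count times.
    unseen = sorted(ch for ch in charset if counts[ch] < min_count)
    covered = len(charset) - len(unseen)
    return {
        "covered": covered,
        "unseen": unseen,
        "coverage": covered / len(charset) if charset else 0.0,
    }
```

A character set that grows while `coverage` stays low describes the situation in the paragraph above: the output layer has a slot for each character, but few or no images teach the model what it looks like.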

Historical Thread: OCR Meets Synthetic Data

Synthetic data use in Document AI gained momentum in the mid-2010s. SynthText (2016), from the University of Oxford's Visual Geometry Group, pioneered scene-text synthesis for detection tasks, with the approach later extending to document understanding. NAVER's SynthDoG (2022) introduced a multilingual document image synthesis pipeline that drew wide attention, though achieving real-world accuracy with synthetic data alone remained difficult at the time.

NVIDIA's release demonstrates that when rendering engine diversity and randomization are sufficiently high, synthetic-only training can produce practically viable multilingual OCR. The explosion of Large Language Models (LLMs) has accelerated this trend—as pipelines feeding extracted document text into LLMs became standard, OCR quality began determining the fate of entire downstream workflows, making multilingual accuracy critical.

[Expert Analysis] Implications and Outlook

Notably, NVIDIA released not just the model but the pipeline itself. The team states the synthetic data pipeline is designed to extend to any language for which fonts and source text exist—a meaningful reduction in barriers for researchers working with lower-resource languages.

On the speed side, 34.7 pages per second on a single A100 is practically viable for enterprise-scale batch document processing. The shared backbone architecture—where detection, recognition, and relational models reuse features—enables this throughput by eliminating redundant computation.
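To put the throughput figure in batch-processing terms, here is a back-of-the-envelope sketch. It assumes the reported 34.7 pages per second is sustained for the whole job and that throughput scales linearly with GPU count; neither assumption is confirmed by the article, and real pipelines also spend time on I/O and preprocessing.

```python
PAGES_PER_SECOND = 34.7  # reported figure for a single A100


def pages_per_hour(pps: float = PAGES_PER_SECOND) -> float:
    """Pages processed in one hour at a sustained rate."""
    return pps * 3600


def hours_for(pages: int, gpus: int = 1, pps: float = PAGES_PER_SECOND) -> float:
    """Estimated wall-clock hours, assuming linear scaling across GPUs."""
    seconds = pages / (pps * gpus)
    return seconds / 3600
```

Under these assumptions, one A100 handles about 125,000 pages per hour, so a corpus of one million pages would take roughly eight hours on a single GPU.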

Limitations remain. Handwriting, heavily degraded historical documents, and specialized domain terminology represent distributions difficult to cover adequately with synthetic data. NED improvements aside, real-world performance on specific business document types warrants further domain-specific evaluation.

Nemotron OCR v2 is likely to see broad adoption in enterprise document processing, RAG (Retrieval-Augmented Generation) preprocessing pipelines, and multilingual digital archive construction. Whether the open-source release catalyzes community-driven expansion to additional languages will be worth watching.

More in this series

More in AI & Tech

Latest News

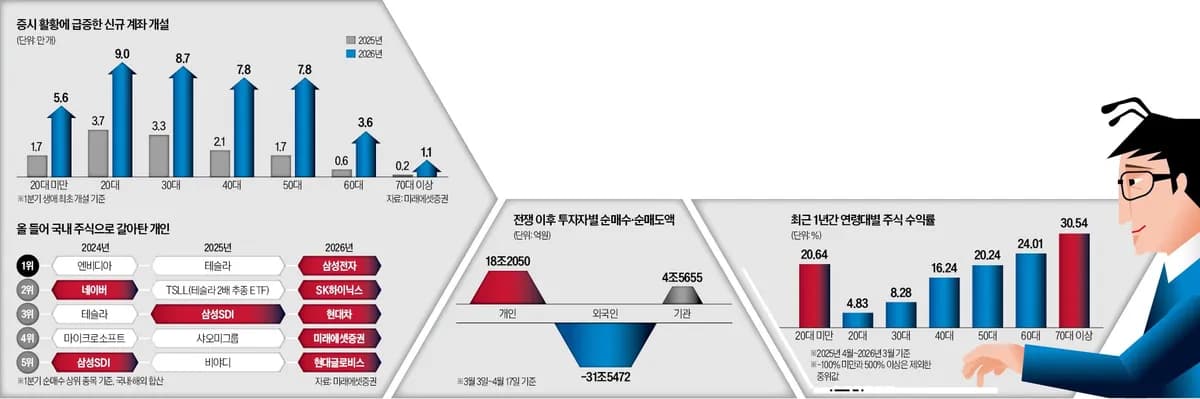

Buy in Fear, Sell in Greed — Retail Investors Credited for Defending KOSPI 5000

Donghak Ants absorb foreign selloffs, playing a key role in defending KOSPI 5000

중국 스마트폰 시장 침체 속 애플 아이폰 출하 20% 급증

애플 아이폰의 중국 1분기 출하량이 전년 대비 20% 급증해 주요 업체 중 최고 성장률을 기록했다.

이란 전쟁發 에너지 위기, EU 스태그플레이션 경계선에 서다

IMF가 이란 전쟁發 에너지 위기로 EU 경기침체 가능성을 경고했다.

ICE Acting Director Todd Lyons to Resign at End of May, DHS Confirms

ICE Acting Director Todd Lyons officially set to resign at end of May, per DHS

Trump Maintains Naval Blockade as Iran Declares Full Opening of Strait of Hormuz

Trump reaffirms naval blockade on Iran, says Israel will not strike Lebanon again

호르무즈 봉쇄가 바꾼 에너지 지도, 재생에너지 전환 가속

호르무즈 해협 봉쇄로 하루 1,300만 배럴 원유 공급이 차질을 빚으며 유가가 급등했다.

호르무즈 재개방 선언에도 파나마 운하 적체 해소 '요원'

이란이 호르무즈 해협 완전 개방을 선언했지만 미 해군 봉쇄는 유지됐다.

호르무즈 해협 재개방에 금값 급등·유가 폭락

이란의 호르무즈 해협 재개방 선언에 금값이 3월 이후 최고치로 상승했다.