The Paradox of the AI Era: Surging Power Consumption and the Crossroads of Clean Energy Transition

MIT Researchers Warn 'Data Center Power Demand Could Reach 15% of Total U.S. Electricity by 2030'

- AI computing center power demand is surging and projected to account for 12-15% of total U.S. electricity by 2030.

- A single ChatGPT conversation consumes power equivalent to charging a smartphone once, with AI model maintenance power doubling every 3 months.

- MIT researchers propose solving the problem through improved AI efficiency, clean energy transition, and AI-driven energy system innovation.

The Scale of the Power Crisis Triggered by AI

The explosive growth of artificial intelligence (AI) computing centers is placing unprecedented strain on power grids. At the annual symposium "AI and Energy: Crisis and Opportunity" held by the MIT Energy Initiative (MITEI) on May 13, the energy dilemma brought on by AI and potential solutions were the focus of intensive discussion.

Data centers currently consume approximately 4% of total U.S. electricity, and some forecasts project that share will surge to 12-15% by 2030. After decades of stagnation, U.S. power demand has shifted into a rapid growth phase due to AI.
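As a rough illustration of what that forecast implies, the sketch below computes the compound annual growth in data centers' share of U.S. electricity. The five-year horizon (2025 to 2030) and the function name are assumptions made for this example, and the result describes growth in share, not in absolute consumption.

```python
def implied_annual_growth(start_share: float, end_share: float, years: int) -> float:
    """Compound annual growth rate that takes start_share to end_share in `years`."""
    return (end_share / start_share) ** (1 / years) - 1


YEARS = 5  # assumption: roughly 2025 -> 2030

# Article figures: ~4% today, 12-15% forecast for 2030
low = implied_annual_growth(0.04, 0.12, YEARS)
high = implied_annual_growth(0.04, 0.15, YEARS)

print(f"Implied growth in share: {low:.1%} to {high:.1%} per year")
```

Under these assumptions, the share would need to grow by roughly a quarter to a third every year to reach the forecast range.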

Vijay Gadepally, senior research scientist at MIT Lincoln Laboratory, explained that "the power required to maintain large-scale AI models is increasing by nearly 2x every 3 months," adding that "a single conversation with ChatGPT consumes as much power as charging a mobile phone, and generating a single image requires about a bottle of water for cooling."

Currently, AI data centers with capacities of 50-100 megawatts (MW) are rapidly emerging worldwide. OpenAI CEO Sam Altman emphasized in congressional testimony that "the cost of AI, ultimately the cost of intelligence, will converge to the cost of energy."

Why This Problem Matters Now

William H. Green, director of MITEI and professor of chemical engineering at MIT, stated, "We stand at the precipice of enormous change across the economy," adding that "we must simultaneously solve two challenges: localized power supply issues and achieving clean energy goals."

The surge in AI energy demand is not merely a burden on the power grid. It directly conflicts with global climate goals. With many countries and corporations having set carbon neutrality targets, AI computing threatens to increase dependence on fossil fuel-based electricity.

At the same time, AI technology holds the potential to revolutionize energy systems themselves. Experts point out that it can be utilized as a tool to accelerate clean energy transition through power grid optimization, renewable energy forecasting, and energy storage technology development.

Data Center Energy Demand: Past vs. Present Comparison

| Category | Pre-2020 | Current (2025) | 2030 Projection |
|---|---|---|---|
| Share of Total U.S. Electricity | ~2% | ~4% | 12-15% (forecast) |
| Power Demand Trend | Stagnant for decades | Rapid growth beginning | Continued rise |
| Large-scale Center Power Capacity | 10-20 MW | 50-100 MW | 100+ MW |
| AI Model Power Growth Rate | - | 2x every 3 months | - |
| Single Task Power Consumption | - | 1 ChatGPT conversation = 1 smartphone charge | - |

While data centers previously focused primarily on cloud storage and web services, they now dedicate massive computing resources to large language model (LLM) training and inference. The broad availability of generative AI services like ChatGPT and Gemini has driven a simultaneous surge in demand from individual users and institutional researchers.

Historical Context of AI Energy Issues

Computing energy consumption is not a new problem. Debates over data center efficiency began in the early 2000s, and during the cloud computing era of the 2010s, efficiency metrics like PUE (Power Usage Effectiveness, the ratio of a facility's total energy use to the energy delivered to its IT equipment) became industry standards.

However, everything changed after ChatGPT's launch in 2022. The democratization of generative AI led to:

- 2022-2023: Explosion of consumer-facing AI services like ChatGPT and Stable Diffusion

- 2023-2024: Corporate AI adoption race, rapid increase in LLM training scale

- 2024-2025: Intensified computing load from multimodal AI and real-time inference models

- 2025-Present: Facing energy supply limits, collision with clean energy goals

Over the past three years in particular, as AI model parameters and training data have grown exponentially, energy consumption has surged along with them. The "2x every 3 months" growth rate presented by MIT Lincoln Laboratory far outpaces the doubling cadence associated with Moore's Law.
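To put the "2x every 3 months" figure in perspective, the following rough sketch annualizes it and compares it with a Moore's Law cadence. The 24-month doubling period used for Moore's Law is an assumption (commonly cited values range from 18 to 24 months), and the function name is illustrative.

```python
def annual_growth_factor(doubling_period_months: float) -> float:
    """Return the multiplicative growth over 12 months for a given doubling period."""
    return 2 ** (12 / doubling_period_months)


ai_factor = annual_growth_factor(3)      # doubling every 3 months, per the article
moore_factor = annual_growth_factor(24)  # assumed Moore's Law cadence

print(f"AI model power: {ai_factor:.0f}x per year")
print(f"Moore's Law (assumed 24-month doubling): {moore_factor:.2f}x per year")
```

Doubling every 3 months compounds to four doublings a year, or 16x, while a 24-month doubling period yields only about 1.4x over the same span.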

Industry and Academic Response Directions

The symposium discussed multilayered solutions to AI energy challenges.

1. Demand-Side Optimization

In a panel featuring IBM's Dustin Demetriou, Carnegie Mellon University's Emma Strubell, and MIT Lincoln Laboratory's Gadepally, improving AI model efficiency was identified as a core challenge. This includes reducing unnecessary computations, applying model compression techniques, and optimizing energy use during the inference stage.

2. Supply-Side Clean Energy Transition

Constellation Energy's Strategy Manager Kathryn Biegel emphasized that "for data centers to achieve clean energy goals, renewable energy power purchase agreements (PPAs), nuclear power utilization, and energy storage system deployment are essential."

3. Energy System Innovation Through AI

Paradoxically, AI can also be part of the solution to energy problems. It is already being used for power grid demand forecasting, renewable energy generation optimization, and battery storage system management, all of which can accelerate the clean energy transition.

What Lies Ahead [AI Analysis]

AI energy issues are likely to become a central agenda item for the technology industry and energy policy over the next five years.

In the short term, existing power grid capacity limits may delay data center construction or concentrate locations in areas with abundant power supply (e.g., near nuclear plants, renewable energy-dense regions). Some countries and regions may introduce regulations on data center energy consumption.

In the medium term, improvements in AI model efficiency will become a critical inflection point. As awareness spreads that the current "2x every 3 months" growth rate is unsustainable, model compression, computational optimization, and dedicated hardware (NPU, TPU) development are expected to accelerate.

In the long term, AI is likely to become a key tool for clean energy transition. AI can drive innovation in power grid management, renewable energy forecasting, fusion research, and battery material development, improving overall energy system efficiency.

However, all these scenarios are only possible when policy commitment and technological innovation occur simultaneously. As MITEI Director Green emphasized, finding the balance point to "capture AI's benefits while minimizing harm" will be the core challenge of 21st-century energy transition.

More in AI & Tech

Reddit Considers Face ID to Block Bots While Maintaining Anonymity

China Reduces Hypersonic Missile Core Technology Simulation to 7 Days

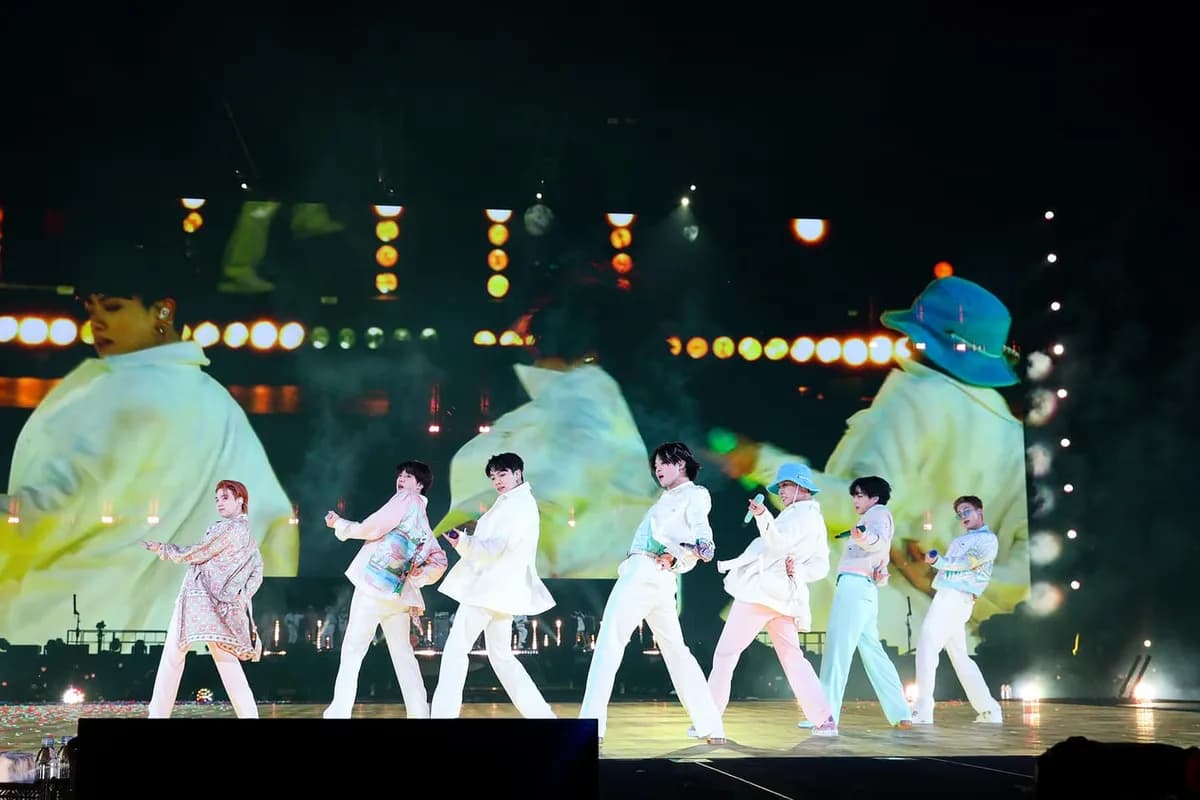

BTS Gwanghwamun Concert: AI Network Prevents Communication Crisis for 40,000 Fans

Czech Drone Factory Fire Under Investigation for Terrorism

Trump Slams NATO Allies as 'Cowards' Over Strait of Hormuz Refusal

Google Unveils Gemini 3.1 Flash-Lite Optimized for High-Volume Processing

Latest News

이스라엘, 헤즈볼라 무기 통로 레바논 다리 공습

이스라엘군, 헤즈볼라 무기 통로 레바논 다리 공습

중동행 전세기 전쟁보험료 최고 7천500만원

중동행 전세기 전쟁보험료가 최고 5만달러(7천500만원)로 상승

이란 탄도미사일, 이스라엘 방어망 뚫고 160명 부상

이란 탄도미사일이 이스라엘 방공망을 통과해 160명 부상

Middle East Conflict Drives Manufacturing Outlook to 10-Month Low

The Korea Institute for Industrial Economics & Trade survey shows April manufacturing outlook PSI plummeted to 88, falling below baseline for the first time in 10 months.

Lee Jae-myung Administration Excludes Multi-Home Officials from Real Estate Policymaking

President Lee Jae-myung has ordered the exclusion of multi-home owning public officials from all real estate policy processes.

Southeast Asia Growth Forecasts Cut Amid Oil Price Surge, Threatening Korean Exports

Maybank Research has downgraded ASEAN-6's 2026 growth forecast from 4.8% to 4.5%.

Volkswagen CEO Says Germany Should Learn from China's Industrial Strategy

Volkswagen CEO stated that Germany should learn from China's systematic industrial planning approach.

BTS Tops March Artist Brand Reputation Rankings with First Full Group Comeback in 4 Years

BTS ranked first in the Korean Corporate Reputation Research Institute's March Artist Brand Reputation Rankings based on 99 million data points.