Children Harass Peers with Deepfakes as Laws Lag Behind

13-year-old boy creates and shares deepfake nudes of classmate; 15% of U.S. K-12 students aware of AI-generated sexual images of peers

- 15% of U.S. K-12 students are aware of AI-generated sexual images of their classmates, with female students far more likely to be targeted.

- As generative AI technology advances and lowers barriers to deepfake creation, the number of minor perpetrators is rapidly increasing.

- While all 50 U.S. states have criminalized non-consensual distribution of actual images, most state laws do not apply to AI-generated deepfakes, creating a legal gap.

Wisconsin 13-Year-Old Creates Deepfake Nudes from Bat Mitzvah Photos

In October 2024, a 13-year-old male student in Wisconsin used photos from a female classmate's bat mitzvah (a Jewish coming-of-age ceremony) to create deepfake nudes and distributed them via Snapchat. This is not an isolated incident. In recent years, school-age children across the United States have repeatedly used deepfakes to prank or bully classmates.

According to a survey released in September 2025 by the Center for Democracy & Technology (CDT), 15% of U.S. K-12 (kindergarten through 12th grade) students reported being aware of AI-generated sexual images of their classmates. Female students were significantly more likely to be targets of deepfake sexual depictions than male students. CDT concluded that "the issue of non-consensual intimate imagery (NCII), whether real or deepfake, is at a serious level in K-12 public schools."

Why This Matters: Generative AI Has Democratized Deepfakes

When deepfakes first appeared online eight years ago, they were difficult to create. However, advances in generative artificial intelligence (AI) technology have made tools for creating deepfakes easily accessible to anyone. Rebecca Delfino, a law professor at Loyola Marymount University and deepfake researcher, noted that "five or six years ago, there probably weren't this many minors among revenge porn perpetrators."

As the technical barrier to entry has lowered, minors have gained easy access to deepfake apps. Professor Delfino explained, "The behavioral patterns exhibited by minors are not significantly different from the cruelty, humiliation, exploitation, and bullying that existed before," adding that "the difference is the use of technology and how easily the results spread."

Non-consensual intimate imagery (NCII) abuse harms victims' mental health in ways similar to offline sexual violence. Some victims are forced to withdraw from online activity once the images spread.

Laws Can't Keep Up: Most State Laws Don't Cover AI-Generated Images

Federal and state governments in the United States have enacted laws to address non-consensual intimate imagery abuse. Currently, all 50 states and Washington, D.C. have laws criminalizing the non-consensual distribution of real intimate images. In May 2025, President Donald Trump signed the federal Take It Down Act, a similar measure.

However, unlike the federal law, most state laws do not explicitly apply to AI-generated deepfakes. Moreover, laws specifically addressing cases where perpetrators are minors are even rarer. Professor Delfino pointed out that "policymakers want to punish image-based sexual abuse perpetrators 'swiftly and severely,' but often don't consider the perpetrator's age."

Under current law, minor perpetrators are likely to be treated similarly to minors who commit other crimes. Prosecution is possible, but prosecutors and courts determine sentences taking age into account. The problem is that laws are not keeping pace with technological advancement, and there is a particular lack of legal frameworks that simultaneously address both AI-generated content and minor perpetrators.

Comparison: Legal Response to Actual Photos vs. AI-Generated Images

| Category | Actual Photographs | AI-Generated Deepfakes |
|---|---|---|
| Federal Law | Take It Down Act applies (signed May 2025) | Same law applies |
| State Law | Criminalized in all 50 states + DC | Not applicable or unclear in most states |
| Minor Perpetrators | Age considered but prosecution possible | Lack of legal framework, case-by-case judgment |
| Punishment Level | Varies by state (misdemeanor to felony) | Difficult to punish due to state law gaps |

While legal responses to actual photographs have been somewhat systematized, AI-generated deepfakes remain in a legal blind spot. Law enforcement becomes even more complicated when perpetrators are minors.

[AI Analysis] Future Outlook: Triangular Response of Technology, Education, and Law Needed

Deepfake technology is likely to become more sophisticated and accessible in the future. With the spread of open-source generative AI models and the evolution of mobile apps, the barrier to creation will continue to lower. Responding to this requires parallel efforts in three areas.

First, rapid updates to legal frameworks. While federal law includes AI-generated images, state laws are not keeping pace. Legislative guidance is needed to enable states to add deepfake provisions or broadly interpret existing laws. Additionally, separate treatment standards for minor perpetrators (educational intervention, treatment linkage, etc.) must be established.

Second, digital literacy education in schools and homes. Curricula are needed to help children understand the ethical issues and legal responsibilities surrounding deepfakes. The CDT survey revealed the severity of the problem while also showing room for educational intervention.

Third, strengthening platform accountability. Social media platforms like Snapchat and Instagram must enhance AI detection technology and reporting systems to prevent minors from easily creating and sharing deepfakes. App stores also need to strengthen age restrictions and pre-screening for deepfake generation apps.

Image-based sexual abuse remains fundamentally the same regardless of technological changes. The psychological trauma left on victims is no different whether the image is real or AI-synthesized. Whether the next generation's digital environment becomes safer or more dangerous will depend on how seriously and swiftly law and society address this issue.


More in AI & Tech

Reddit Considers Face ID to Block Bots While Maintaining Anonymity

China Reduces Hypersonic Missile Core Technology Simulation to 7 Days

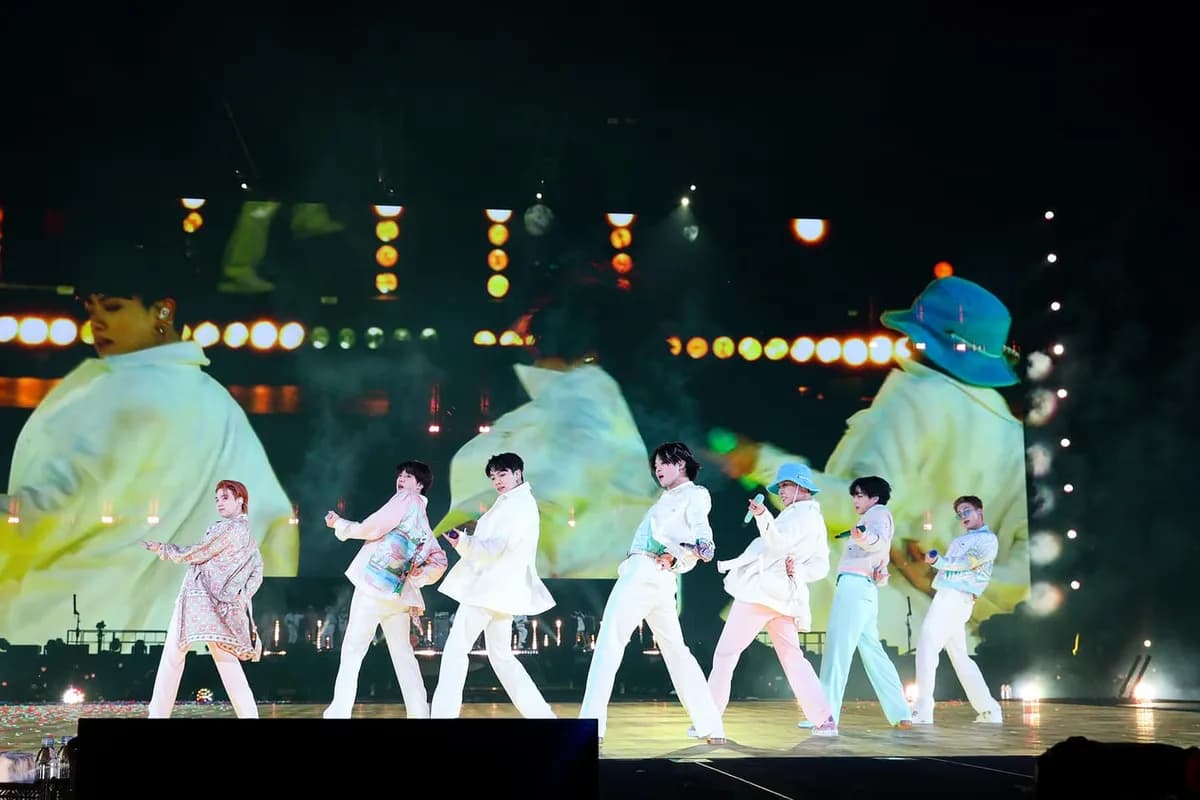

BTS Gwanghwamun Concert: AI Network Prevents Communication Crisis for 40,000 Fans

Czech Drone Factory Fire Under Investigation for Terrorism

Trump Slams NATO Allies as 'Cowards' Over Strait of Hormuz Refusal

Google Unveils Gemini 3.1 Flash-Lite Optimized for High-Volume Processing

Latest News

이스라엘, 헤즈볼라 무기 통로 레바논 다리 공습

이스라엘군, 헤즈볼라 무기 통로 레바논 다리 공습

중동행 전세기 전쟁보험료 최고 7천500만원

중동행 전세기 전쟁보험료가 최고 5만달러(7천500만원)로 상승

이란 탄도미사일, 이스라엘 방어망 뚫고 160명 부상

이란 탄도미사일이 이스라엘 방공망을 통과해 160명 부상

Middle East Conflict Drives Manufacturing Outlook to 10-Month Low

The Korea Institute for Industrial Economics & Trade survey shows April manufacturing outlook PSI plummeted to 88, falling below baseline for the first time in 10 months.

Lee Jae-myung Administration Excludes Multi-Home Officials from Real Estate Policymaking

President Lee Jae-myung has ordered the exclusion of multi-home owning public officials from all real estate policy processes.

Southeast Asia Growth Forecasts Cut Amid Oil Price Surge, Threatening Korean Exports

Maybank Research has downgraded ASEAN-6's 2026 growth forecast from 4.8% to 4.5%.

Volkswagen CEO Says Germany Should Learn from China's Industrial Strategy

Volkswagen CEO stated that Germany should learn from China's systematic industrial planning approach.

BTS Tops March Artist Brand Reputation Rankings with First Full Group Comeback in 4 Years

BTS ranked first in the Korean Corporate Reputation Research Institute's March Artist Brand Reputation Rankings based on 99 million data points.