Apple Confirms Google Gemini Integration for Next-Gen Siri

Cloud AI Partnership Worth $1 Billion Annually to Strengthen Capabilities

- Apple has officially confirmed integration of Google's Gemini technology into next-generation Siri development, agreeing to pay up to $1 billion annually (approximately 1.4 trillion won).

- The new Siri will be redesigned with a three-layer architecture (query planner, knowledge retrieval, and summarization), with Gemini playing a core role in the reasoning and summarization layers.

- Advanced AI features including voice-based app control, personal context awareness, and screen content understanding will be progressively released starting with iOS 26.4 in spring 2026.

Apple and Google Formalize AI Strategy Partnership

Apple has officially confirmed the integration of Google's Gemini technology into the development of next-generation Siri. In an interview with CNBC, Apple stated that "internal evaluations determined Google's technology provides the strongest foundation for building cloud-based foundation models." Google also confirmed the collaboration, stating that "Apple's next-generation foundation model will operate on top of Gemini."

Under this partnership, Apple will pay Google up to $1 billion annually (approximately 1.4 trillion won). Apple is adopting a customized Gemini model that is significantly larger than the model behind the current cloud-based Siri, strengthening core functions that require advanced reasoning and contextual understanding.

Siri Redesigned with Three-Layer Architecture

The new Siri will be built on three core layers:

1. Query Planner (Reasoning Layer): Serves as the brain that determines how to process user requests. It decides whether web search is needed, whether to access personal data, or whether to interact with apps through App Intents. Gemini will play a central role in this component.

2. Knowledge Retrieval System: A knowledge-based system capable of answering general questions without relying on external services.

3. Summarization System: Handles core Apple Intelligence features including notification summaries, webpage summaries, and long-text summarization. Gemini is expected to play a major role here as well.
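The routing decision attributed to the query planner can be illustrated with a minimal sketch. Everything here is an assumption for illustration: the `Route` names, the keyword sets, and the `plan_query` function are hypothetical, and a real planner would presumably rely on a language model rather than keyword matching. The sketch only shows the shape of the decision described above (app action, personal data, web search, or built-in knowledge).

```python
from enum import Enum


class Route(Enum):
    """Hypothetical destinations a query planner could choose between."""
    APP_INTENT = "app_intent"        # act inside an app
    PERSONAL_DATA = "personal_data"  # consult the user's own data
    WEB_SEARCH = "web_search"        # needs fresh external information
    KNOWLEDGE = "knowledge"          # answerable from built-in knowledge


# Illustrative keyword hints only; not taken from Apple or Google.
APP_VERBS = {"open", "send", "play", "set"}
PERSONAL_WORDS = {"my", "mine"}
FRESH_WORDS = {"today", "latest", "news", "score"}


def plan_query(text: str) -> Route:
    """Pick a route for a request using simple, ordered keyword checks."""
    words = [w.strip("?.!,") for w in text.lower().split()]
    if words and words[0] in APP_VERBS:
        return Route.APP_INTENT
    if any(w in PERSONAL_WORDS for w in words):
        return Route.PERSONAL_DATA
    if any(w in FRESH_WORDS for w in words):
        return Route.WEB_SEARCH
    return Route.KNOWLEDGE
```

Under these assumptions, `plan_query("Send a message to Alex")` selects the app route, while `plan_query("How tall is Mount Everest?")` falls through to the knowledge route.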

Apple has adopted a hybrid strategy, maintaining on-device processing with proprietary models while leveraging Gemini for advanced cloud-based tasks.

New Features Coming in iOS 26.4

Apple plans to introduce the following features in the iOS 26.4 update, scheduled for spring 2026:

- Voice-based in-app task execution: Siri directly controls functions within apps

- Enhanced personal context awareness: More accurate responses tailored to user situations

- Screen content understanding: Recognition of on-screen content to perform complex tasks

CEO Tim Cook stated during a recent earnings call that "development of the new Siri is progressing solidly and we're maintaining our 2026 launch timeline." He also indicated that "we will integrate more AI models in the future," suggesting Apple is building an open architecture capable of partnering with various models beyond ChatGPT and Gemini.

[AI Analysis] Reshaping Big Tech AI Competition Landscape

Apple's Strategic Choice

Behind Apple's decision to partner with Google rather than rely solely on proprietary AI lies a matter of time. Having fallen behind in the generative AI race, Apple needed proven technology for its planned 2026 launch, and Gemini met those requirements.

This represents a departure from Apple's "Not Invented Here" tradition. For a company built on a philosophy of hardware-software integration, outsourcing the brain of Siri, a core feature, indicates that in the AI era speed and performance take precedence over pride.

Google's Strategic Victory

Google gains two things from this contract:

- Stable revenue stream worth up to $1 billion annually: A new source of income from Apple, flowing in the opposite direction of the roughly $20 billion a year that Google reportedly pays Apple for default search placement

- Entry into iPhone ecosystem: Gemini will be involved in the daily AI experiences of 1.5 billion iPhone users

However, Apple maintains on-device processing with proprietary models, using Gemini only for advanced cloud tasks. This is not complete dependence.

Beginning of Multi-Model Strategy

Apple's mention of a standards-based architecture such as the Model Context Protocol (MCP) is also noteworthy. This opens the possibility for Siri to evolve into a structure that can selectively use ChatGPT, Gemini, proprietary models, and others depending on the situation.

Following OpenAI's ChatGPT integration into Siri in late 2024, and now with Gemini's addition, Apple appears to be pursuing a strategy to position itself as a "model-agnostic platform." This is a practical choice to maintain peak performance while avoiding dependence on any specific AI model.
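What a "model-agnostic platform" could mean in practice is sketched below. This is an assumption-laden illustration, not a description of Apple's design: `ModelRouter`, the backend names, and the task labels are all hypothetical, and the backends are stub functions standing in for real models. The point is only that callers name a task and the platform decides which model handles it.

```python
from typing import Callable, Dict

# A backend is any callable that maps a prompt to a response string.
Backend = Callable[[str], str]


class ModelRouter:
    """Hypothetical dispatcher that hides which model serves each task."""

    def __init__(self, default: str) -> None:
        self._backends: Dict[str, Backend] = {}
        self._task_map: Dict[str, str] = {}
        self._default = default

    def register(self, name: str, backend: Backend) -> None:
        """Make a backend available under a name."""
        self._backends[name] = backend

    def assign(self, task: str, name: str) -> None:
        """Send a task type to a specific registered backend."""
        if name not in self._backends:
            raise KeyError(f"unknown backend: {name}")
        self._task_map[task] = name

    def run(self, task: str, prompt: str) -> str:
        """Run the prompt on the assigned backend, or on the default."""
        name = self._task_map.get(task, self._default)
        return self._backends[name](prompt)


# Stub backends for illustration; real ones would call actual models.
router = ModelRouter(default="on_device")
router.register("on_device", lambda p: f"[on-device] {p}")
router.register("gemini", lambda p: f"[gemini] {p}")
router.register("chatgpt", lambda p: f"[chatgpt] {p}")
router.assign("summarize", "gemini")
```

With this setup, `router.run("summarize", "long article")` goes to the stub labeled `gemini`, while any unassigned task falls back to the on-device stub. Swapping a model then means changing one `assign` call rather than every caller.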

Future Outlook

With the iOS 26.4 release, the actual performance of the new Siri will reveal whether Apple's choice was sound. The query planner's reasoning capabilities and personal context understanding will be key evaluation metrics.

Whether Apple will pursue parallel development of large-scale proprietary models or pivot completely toward a multi-model integration platform is another point to watch. 2026 will be the true testing ground for Apple's AI strategy.


More in this series

More in AI & Tech

Reddit Considers Face ID to Block Bots While Maintaining Anonymity

China Reduces Hypersonic Missile Core Technology Simulation to 7 Days

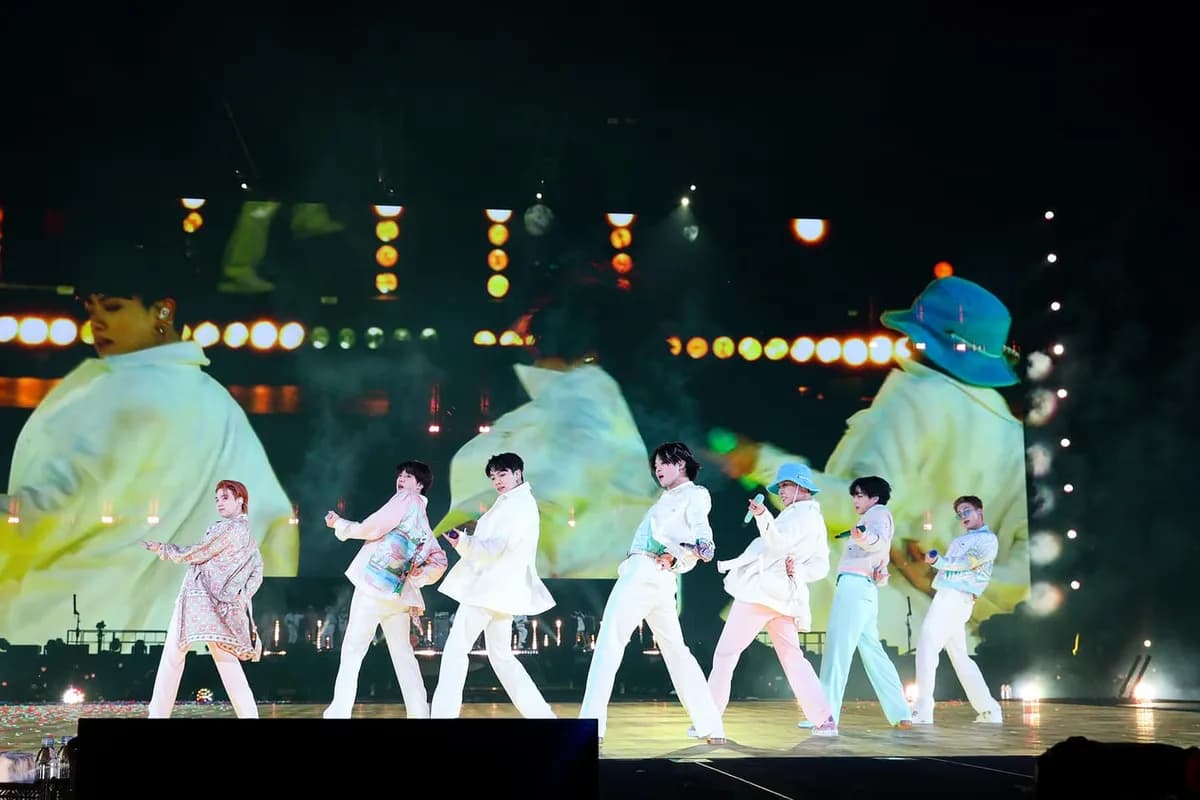

BTS Gwanghwamun Concert: AI Network Prevents Communication Crisis for 40,000 Fans

Czech Drone Factory Fire Under Investigation for Terrorism

Trump Slams NATO Allies as 'Cowards' Over Strait of Hormuz Refusal

Google Unveils Gemini 3.1 Flash-Lite Optimized for High-Volume Processing

Latest News

이스라엘, 헤즈볼라 무기 통로 레바논 다리 공습

이스라엘군, 헤즈볼라 무기 통로 레바논 다리 공습

중동행 전세기 전쟁보험료 최고 7천500만원

중동행 전세기 전쟁보험료가 최고 5만달러(7천500만원)로 상승

이란 탄도미사일, 이스라엘 방어망 뚫고 160명 부상

이란 탄도미사일이 이스라엘 방공망을 통과해 160명 부상

Middle East Conflict Drives Manufacturing Outlook to 10-Month Low

The Korea Institute for Industrial Economics & Trade survey shows April manufacturing outlook PSI plummeted to 88, falling below baseline for the first time in 10 months.

Lee Jae-myung Administration Excludes Multi-Home Officials from Real Estate Policymaking

President Lee Jae-myung has ordered the exclusion of multi-home owning public officials from all real estate policy processes.

Southeast Asia Growth Forecasts Cut Amid Oil Price Surge, Threatening Korean Exports

Maybank Research has downgraded ASEAN-6's 2026 growth forecast from 4.8% to 4.5%.

Volkswagen CEO Says Germany Should Learn from China's Industrial Strategy

Volkswagen CEO stated that Germany should learn from China's systematic industrial planning approach.

BTS Tops March Artist Brand Reputation Rankings with First Full Group Comeback in 4 Years

BTS ranked first in the Korean Corporate Reputation Research Institute's March Artist Brand Reputation Rankings based on 99 million data points.