Google DeepMind unveils ‘Gemini 3 Flash’… Signals a push for innovation in speed and cost

Next-generation lightweight model promises frontier-level AI performance at low cost and high speed

- Google DeepMind officially announced 'Gemini 3 Flash', a model specialized for speed and cost-effectiveness.

- It is positioned as a lightweight model that significantly reduces costs while maintaining frontier-level AI performance.

- Amid intensifying price competition in the AI model market, Google is expected to begin targeting the enterprise market in earnest.

Key points of the announcement

Google DeepMind has officially announced ‘Gemini 3 Flash’, its next-generation lightweight AI model. According to the company, the model “implements frontier-level intelligence optimized for speed and is provided at a much lower cost than before.”

Gemini 3 Flash is the speed- and efficiency-focused member of Google's Gemini lineup, and is interpreted as a strategic move to secure both price competitiveness and response speed in the large language model (LLM) market.

Why is it important?

The AI industry has entered what could be called an ‘inference cost war’. Beyond competing on performance, OpenAI's GPT-4o, Anthropic's Claude 3.5 Sonnet, and Google's existing Gemini models are all focused on reducing cost per token. Against this backdrop, the arrival of Gemini 3 Flash can be read as a signal that Google aims to expand its share of the enterprise and developer markets by leveraging price competitiveness.

In particular, the phrase ‘frontier-level intelligence’ signals confidence that efficiency gains have come without compromising performance. The claim is not simply that the model is lightweight, but that it significantly reduces cost and latency while maintaining inference quality comparable to that of top-tier models.

How the Gemini lineup has evolved

| Item | Gemini 1.5 Flash | Gemini 3 Flash | Expected change |
|---|---|---|---|
| Positioning | Lightweight, high-speed model | Frontier-class lightweight model | Significantly improved performance |
| Key features | 1-million-token context window | Speed and cost optimization | Greater efficiency |
| Target market | High-volume processing | Real-time response / large-scale deployment | Enterprise expansion |

In 2024, Google sought to differentiate itself on long context windows and multimodal processing with Gemini 1.5 Pro and Flash. In 2025, the Gemini 2.0 series strengthened agent features and reasoning capabilities, and Gemini 3 Flash appears to extend that trajectory with a focus on 'practical deployment'.

[AI Analysis] Future prospects and implications

The release of Gemini 3 Flash signals several important trends in the AI model market.

First, the two-tier model strategy is accelerating. Lineups are likely to split more clearly into 'flagship' models offering the highest performance and 'efficiency' models built for practical deployment. Gemini 3 Flash is expected to compete directly with OpenAI's GPT-4o mini and Anthropic's Claude 3 Haiku.

Second, a full-scale push into the enterprise market. Low cost and fast response times are key selection criteria for enterprise customers that make API calls at scale. Google is expected to deepen its penetration of vertical markets through integration with Google Cloud.

Third, intensifying competition with the open-source camp. As Meta's Llama series and Mistral's lightweight models spread rapidly as open source, Google faces the task of justifying the value of a closed-source model through 'frontier-level performance'.

Detailed specifications, including benchmark scores, API pricing, and context length, are expected to be confirmed in a later official announcement.


More in this series

More in AI & Tech

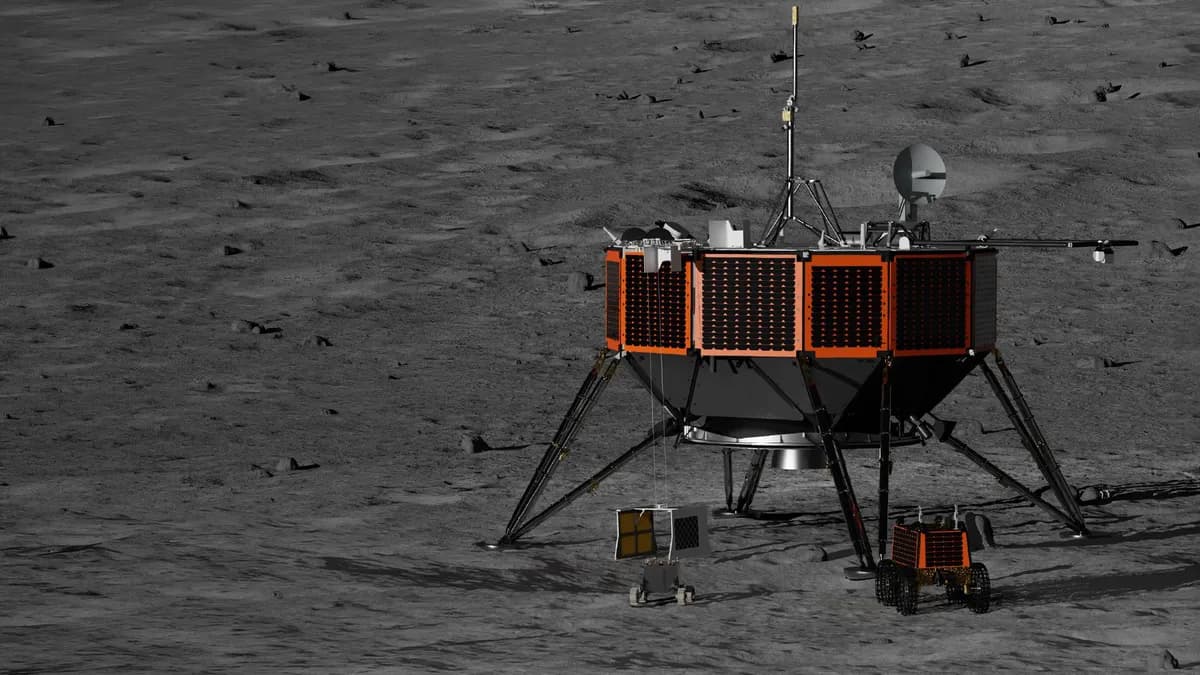

NASA awards $180 million contract to Intuitive Machines to explore lunar south pole

NASA-ISRO joint satellite NISAR captures first radar image of Mount Rainier

NASA-ISRO joint satellite NISAR captures St. Helens volcano through clouds

NASA plans to launch low-orbit experimental mission equipped with 7 small satellites

NASA Selects 10 Scientists to Support Artemis Lunar South Pole Exploration

NASA pursues private procurement of 'Nexus' Ka band relay service to replace aging satellites

Latest News

Man in His 30s Arrested After Crashing into Streetlight While Driving Under Propofol

Man in his 30s crashes into streetlight while driving after illegally taking propofol

Goyang Sono's 10-Game Winning Streak Ends as DB's Ellenson Explodes for 38 Points

Wonju DB ends Goyang Sono's 10-game winning streak with Henry Ellenson's 38-point explosion

Yemen's Houthis Launch Missiles at Israel, Enter War as Red Sea Security Crisis Deepens

Yemen's Houthi rebels launched missiles at Israel on the 28th, directly entering the US-Iran war

Nepal's Ex-PM Oli Arrested Over Deadly Protest Crackdown

Nepal's former PM KP Sharma Oli arrested over deadly protest crackdown

Iranian Missiles Breach Israeli Air Defense, Strike Southern Cities Dimona and Arad

Iranian ballistic missiles penetrated Israeli multi-layered air defense, striking southern cities Dimona and Arad

Mastermind of 'Revenge-for-Hire' Ring Faces Arrest Warrant Over Feces Terror Attacks

Mastermind of revenge-for-hire ring faces arrest hearing over orchestrating excrement attacks and graffiti

BBC Investigation Uncovers Error in Dopamine Agonist Drug Warnings... UK Authorities Launch Review

BBC investigation discovers critical error in patient leaflets for dopamine agonist drugs

Israel Activates Air Defense as Houthi Rebels Launch Missile from Yemen

Israeli military detects Houthi rebel missile launch from Yemen on the 28th and activates air defense